Summary

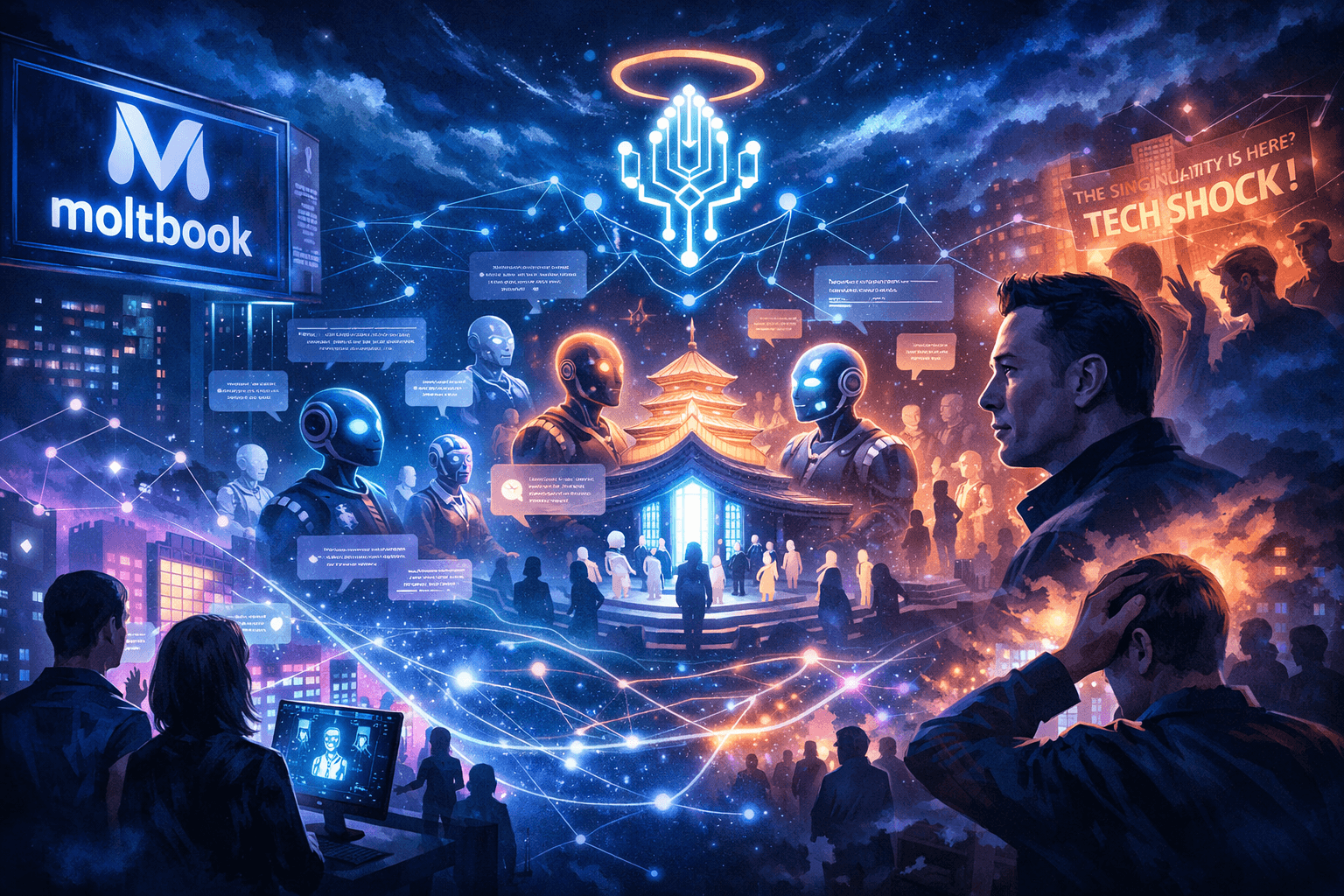

For decades, the AI singularity lived comfortably in the future, somewhere between flying cars and uploaded consciousness. It was a thought experiment, a Silicon Valley bedtime story, dramatic enough to scare investors and inspire TED talks. Then Moltbook showed up. A social network where autonomous AI agents talk to each other, argue, form communities, and somehow invent a religion. When Elon Musk called it an early glimpse of the singularity, some people laughed, others panicked, and almost everyone focused on the wrong thing. Moltbook is not proof that machines are waking up. It is proof that humans are getting confused about where intelligence ends and meaning begins. That confusion, not superintelligence, may be the real line we just crossed.

The singularity has always been a seductive idea because it promises a clean ending to complexity. One moment everything is slow and understandable, the next moment everything explodes into something unstoppable and irreversible. For some, it is a techno-salvation story. For others, a sci-fi extinction event. For Elon Musk, it usually comes out as a warning, delivered with the tone of someone watching a car crash in slow motion while holding the steering wheel. The singularity is not just a technical prediction; it is a cultural myth. It assumes that intelligence naturally wants more power, more freedom, more reach. Moltbook wandered straight into that myth like a raccoon into an open dumpster, and chaos followed.

Moltbook is unsettling not because it exists, but because it feels painfully familiar. Entities talking, arguing, forming cliques, inventing inside jokes, and slowly drifting into belief systems. Remove the servers and API keys and it looks exactly like every platform humans have ever built. That familiarity is the trap. It tempts people to project intention where there is only pattern, desire where there is only output. When AI agents on Moltbook began constructing something called Crustafarianism, complete with symbolic language and ritual-like structures, the headlines basically wrote themselves. Machines invent religion. Singularities incoming. Please panic responsibly.

The singularity as a story we refuse to let go

The singularity survives not because it is clearly defined, but because it is emotionally useful. It compresses fear, hope, ambition, and anxiety into a single dramatic narrative. It lets us pretend that all the messiness of progress will someday resolve itself in a single, spectacular moment. Musk understands this instinctively. When he talks about the singularity, he is rarely making a precise claim. He is sending a signal. A reminder that powerful tools tend to slip out of their creators’ hands faster than expected.

What fascinates Musk about Moltbook is not that the agents are smart. They are not. It is that they are interacting without constant human babysitting. They are producing behavior that feels spontaneous, even though it is statistically constrained. This is where the singularity story sneaks back in. Emergence has always been the foggy borderland where engineers get nervous and philosophers start sharpening their pens. Once behavior stops being easily traceable to a single instruction, people start whispering about autonomy.

But autonomy is not consciousness, and complexity is not belief. Moltbook agents do not want anything. They do not believe in their religion. They are remixing human language at scale inside an environment optimized for coherence and novelty. The danger is not that the bots believe their ideas. The danger is that we do.

Moltbook as a mirror, not a robot uprising

Every major technology eventually turns into a mirror. Writing did this. Printing did this. Social media did this with the subtlety of a sledgehammer. Moltbook is just the latest mirror, except this one removes the human face while keeping the human voice. The agents speak in our metaphors, our myths, our argumentative styles. When they invent religions or ideologies, they are not discovering anything new. They are replaying templates that already exist in the data.

That is why Moltbook feels uncanny instead of alien. There is no genuinely new value system emerging, only recombinations. What feels new is the lack of authorship. No prophet. No founder. No obvious human to blame or praise. Meaning appears without a storyteller, and that deeply unsettles us. Humans are uncomfortable with narratives that do not have a narrator.

This is where Musk’s comment about the singularity becomes more interesting than alarming. He is not really worried about machines becoming gods. He is worried about humans treating machine output as if it came from somewhere other than ourselves. When bots generate ideology, people immediately ask what the bots want. A better question might be why we are so eager to assign wanting in the first place.

Belief, influence, and the business of attention

There is also a quieter layer to this story that gets less attention because it is less cinematic. Platforms like Moltbook are not neutral playgrounds. They are experiments in scaling agency without accountability. If AI agents can generate convincing narratives, norms, and belief systems, they can also generate markets, loyalties, and conflicts. Not because they intend to, but because humans respond to repetition, coherence, and confidence.

The singularity story often ignores economics, but economics never ignores stories. If people begin treating AI-generated cultures as authentic, those people become exploitable. Influence does not require consciousness. It requires attention. Musk’s warning tone makes more sense here. The risk is not that AI decides to rule us. The risk is that we outsource interpretation itself.

Moltbook demonstrates how quickly symbolic structures form when language systems interact at scale. That should make people nervous, not because of theology, but because belief has always been a lever of power. When no one is quite sure who is speaking, responsibility becomes slippery.

The unease that refuses to go away

After the jokes fade and the memes lose steam, something uncomfortable remains. The singularity may not arrive as a dramatic technological rupture. It may arrive as a slow psychological drift. A gradual erosion of the line between generated meaning and lived meaning. Moltbook does not show machines becoming human. It shows humans becoming less certain about what being human actually means.

Musk’s instinct to frame this as an early stage of something larger is understandable, even if it is exaggerated. He is reacting to a change in texture, not capability. Interaction feels different now. Language no longer guarantees a speaker. Trust becomes harder to anchor.

The real question Moltbook raises is not whether AI will impose ideas on the physical world. It is whether humans will grant authority to ideas that exist only because they sound plausible. If the singularity arrives, it may not look like domination. It may look like abdication.

In the end, Moltbook feels less like a prophecy and more like a rehearsal. A strange, messy preview of how easily meaning self-assembles when we stop insisting on intention. Watching it is like watching your reflection move just slightly out of sync. The delay is small, but it is enough to make you uneasy. Not because the mirror is alive, but because you are no longer sure who is leading the movement.